AEO Needs Good Bots—But Your WAF Might Be Blocking the Wrong Ones

For marketing and PR managers, this creates a critical dilemma. How do you allow verified “good bots” such as Googlebot, Bingbot, and select legitimate AI agents to access the right public content to drive leads and strengthen brand presence, while keeping malicious scrapers and impersonators firmly out?
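To make "verified" concrete: both Google and Bing document a reverse-DNS check followed by a forward-DNS confirmation as a way to tell their real crawlers from impersonators. The sketch below illustrates that idea under stated assumptions. The function name `is_verified_bot`, the `TRUSTED_SUFFIXES` table, and the injectable `reverse`/`forward` resolvers are hypothetical names chosen for this example, the suffix list is deliberately short, and the default forward lookup only handles IPv4.

```python
import socket
from typing import Callable, List, Optional

# Hostname suffixes that the crawler operators document for reverse-DNS checks.
# Assumption: a deliberately short table for illustration, not a complete list.
TRUSTED_SUFFIXES = {
    "googlebot": (".googlebot.com", ".google.com"),
    "bingbot": (".search.msn.com",),
}


def _reverse_dns(ip: str) -> Optional[str]:
    """Return the PTR hostname for an IP, or None if the lookup fails."""
    try:
        return socket.gethostbyaddr(ip)[0]
    except OSError:
        return None


def _forward_dns(host: str) -> List[str]:
    """Return the IPv4 addresses a hostname resolves to (empty on failure)."""
    try:
        return socket.gethostbyname_ex(host)[2]
    except OSError:
        return []


def is_verified_bot(
    ip: str,
    claimed_bot: str,
    reverse: Callable[[str], Optional[str]] = _reverse_dns,
    forward: Callable[[str], List[str]] = _forward_dns,
) -> bool:
    """Check a claimed crawler identity with reverse DNS plus forward confirmation."""
    suffixes = TRUSTED_SUFFIXES.get(claimed_bot.lower())
    if not suffixes:
        return False  # We have no verification rule for this bot name.
    host = reverse(ip)
    if host is None or not host.lower().rstrip(".").endswith(suffixes):
        return False  # The PTR record does not belong to the claimed operator.
    # Forward-confirm: the hostname must resolve back to the same IP,
    # otherwise anyone controlling their own PTR record could spoof it.
    return ip in forward(host)
```

A request whose user agent says "Googlebot" but whose IP fails this check is an impersonator, whatever its headers claim. In production the lookups would normally be cached, since DNS round trips on every request are expensive.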

Most websites still rely on WAFs or basic bot controls that force a binary decision: allow or block. These tools match on request patterns or IP lists, so they struggle with the grey-zone bots that dominate today’s crawl traffic: agents that are neither clearly helpful nor outright malicious. Think ambiguous crawlers, research bots, or emerging AI tools that mimic legitimate behavior but can quickly turn abusive if misconfigured or hijacked.

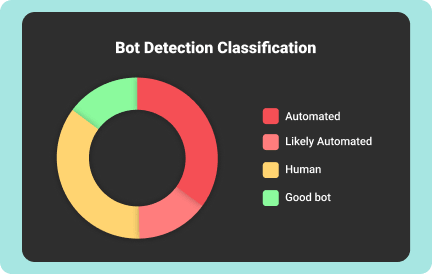

Block too aggressively and you risk hurting your own AEO visibility and rankings. Allow too freely and competitors can scrape your pricing, clone gated content, or overload your infrastructure. Traditional defenses aren’t built for this kind of ambiguity. Marketing teams need intent-based control: the ability to classify bots by behavior and decide access at the content level. That depends on visitor-level visibility into which bot is requesting which content, and how it behaves while doing so.
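As a rough illustration of what deciding access at the content level could look like, here is a minimal sketch of a policy that moves past allow-or-block. Everything in it is an assumption made for the example: the `BotVisit` fields, the path prefixes, the 60 requests-per-minute threshold, and the three outcomes (`allow`, `challenge`, `block`) are hypothetical and do not describe any particular product.

```python
from dataclasses import dataclass

# Hypothetical content zones; a real site would define its own.
GATED_PREFIXES = ("/gated/", "/members/")
SENSITIVE_PREFIXES = ("/pricing/",)


@dataclass
class BotVisit:
    verified: bool            # identity confirmed, e.g. via reverse DNS
    requests_per_minute: int  # observed request rate for this visitor
    path: str                 # content being requested


def decide(visit: BotVisit, rate_limit: int = 60) -> str:
    """Return 'allow', 'challenge', or 'block' for one bot request."""
    # Gated content stays closed to every bot, verified or not.
    if visit.path.startswith(GATED_PREFIXES):
        return "block"
    # Verified crawlers may read everything that is public.
    if visit.verified:
        return "allow"
    # Unverified bots that hammer the site are treated as abusive.
    if visit.requests_per_minute > rate_limit:
        return "block"
    # Grey-zone bots on sensitive pages get a challenge, not a flat block.
    if visit.path.startswith(SENSITIVE_PREFIXES):
        return "challenge"
    return "allow"
```

The point of the sketch is the shape of the decision, not the numbers: the same unverified bot can be allowed on a blog post, challenged on a pricing page, and blocked on gated content, which a single allow-or-block rule cannot express.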

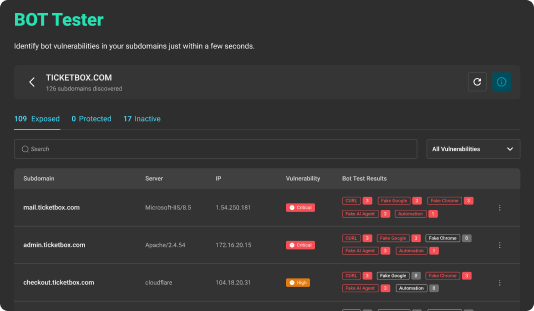

Test your site now: